AI transcription tools have quickly become essential to modern workflows, from meetings and interviews to lectures and research. But as adoption grows, so does a critical concern:

Is AI transcription actually safe?

The answer isn’t as simple as yes or no. It depends heavily on how the tool is built, where your data goes, and who has access to it.

In this guide, we’ll break down the real privacy risks behind AI transcription, and what you should look for if security matters to you.

How AI Transcription Actually Works

To understand the risks, you first need to understand how most transcription tools operate.

The majority of AI transcription apps today rely on cloud-based processing. This typically involves:

1. Recording audio

2. Uploading it to a remote server

3. Processing it using AI models

4. Returning the transcript

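While this enables powerful features and high accuracy, it also means your audio leaves your device and can be exposed at several points: in transit, on the provider’s servers, and in any logs or backups the provider keeps.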

The Hidden Privacy Risks of AI Transcription Tools

1. Data Upload & Storage

When your audio is uploaded, you often lose full control over:

• Where it’s stored

• How long it’s kept

• Whether it’s used for model training

Even if companies advertise encryption, a server that transcribes your audio has to decrypt it to process it, and a copy of the data still exists outside your device.

2. Third-Party Processing

Many transcription tools rely on third-party APIs or infrastructure providers.

This means your data may pass through multiple systems before you receive your transcript.

More systems mean more potential vulnerabilities.

3. Sensitive Content Exposure

For certain use cases, this becomes a serious issue:

• Business meetings with confidential information

• Interviews with private sources

• Medical or legal conversations

In these scenarios, even small risks can be unacceptable.

4. Compliance & Regulation Challenges

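Industries like healthcare and finance must comply with strict data regulations, such as HIPAA for US health information. GDPR adds further obligations for anyone handling personal data of people in the EU, in any industry.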

Cloud-based AI transcription tools may not always meet these requirements, or may meet them only on expensive enterprise plans.

What “Safe AI Transcription” Really Means

Many tools market themselves as “secure,” but real safety comes down to architecture, not marketing.

A genuinely secure transcription tool should provide:

✅ Local Processing (On-Device AI)

Audio is processed directly on your device, not uploaded by default.

✅ User-Controlled Cloud Usage

If cloud features exist, they should be optional, not mandatory.

✅ Transparent Data Policies

Clear information on storage, deletion, and usage.

✅ Minimal Data Exposure

No unnecessary data collection or retention.
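To make “local by default, cloud only by opt-in” concrete, here is a minimal Python sketch of how an app might decide where a transcription job runs. `Destination`, `PrivacySettings`, and `choose_destination` are hypothetical names for illustration, not the API of any real product; the point is that the private path is the default and the cloud path requires an explicit user choice.

```python
from dataclasses import dataclass
from enum import Enum


class Destination(Enum):
    LOCAL = "local"  # transcribed on this device; audio stays here
    CLOUD = "cloud"  # uploaded to a remote service for processing


@dataclass(frozen=True)
class PrivacySettings:
    # Hypothetical user setting: cloud processing is opt-in, so it defaults to off.
    cloud_enabled: bool = False


def choose_destination(settings: PrivacySettings, needs_cloud_feature: bool) -> Destination:
    """Send a job to the cloud only if the user opted in AND the feature requires it."""
    if settings.cloud_enabled and needs_cloud_feature:
        return Destination.CLOUD
    return Destination.LOCAL


# A fresh install never uploads, even when a feature would prefer the cloud.
print(choose_destination(PrivacySettings(), needs_cloud_feature=True).value)  # local
```

Because both conditions must hold, turning cloud on does not push every job to the cloud; features that can run locally still do.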

Cloud vs Local: A Critical Comparison

| Feature | Cloud-Based Tools | Local-First Tools |
|---|---|---|
| Data Upload | Required | Optional |
| Privacy Risk | Medium–High | Low |
| Offline Use | No | Yes |
| Speed | Network dependent | Device dependent |
| Control | Limited | High |

The Shift Toward Local-First AI

In recent years, a new category of AI transcription tools has emerged: local-first AI applications.

Instead of sending your audio to the cloud, these tools:

• Process everything on your device

• Keep recordings local

• Allow optional cloud features only when needed

This approach significantly reduces privacy risks and aligns better with modern expectations around data ownership.

Real-World Example: Privacy-First Transcription

Some newer tools are already adopting this model.

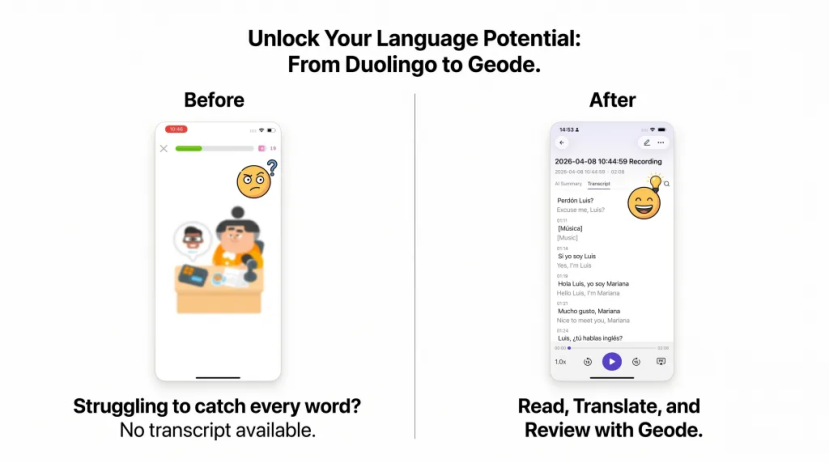

For example, Geode is designed around a local-first workflow:

• Record conversations directly on your device

• Transcribe audio without mandatory uploads

• Generate summaries locally

• Use cloud processing only when explicitly enabled

For users concerned about AI transcription privacy, this kind of architecture offers a much stronger level of control.

When Should You Care About Transcription Privacy?

Not every use case requires maximum security, but many do.

You should prioritize privacy if you are:

• Handling confidential business discussions

• Conducting interviews or research

• Working with sensitive personal data

• Operating under compliance requirements

Even for casual users, the trend is clear:

People increasingly prefer tools that don’t take unnecessary risks with their data.

Practical Tips to Stay Safe

If you’re currently using AI transcription tools, here are a few simple steps to reduce risk:

✔ Check where your data is processed

Look for transparency in documentation.

✔ Avoid automatic cloud uploads

Choose tools that give you control.

✔ Delete recordings after use

Especially for sensitive content.

✔ Consider offline-first tools

These keep your audio on your device, which gives you the most control over it.
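If your tool saves recordings to a local folder, a small retention script can automate the “delete recordings after use” habit. The following is a minimal Python sketch; the folder you point it at, the `AUDIO_SUFFIXES` set, and the `purge_old_recordings` helper are assumptions for illustration, so adapt them to wherever your app actually stores audio.

```python
import time
from pathlib import Path
from typing import List, Optional

# Assumed audio formats to treat as recordings; transcripts and other files are skipped.
AUDIO_SUFFIXES = {".wav", ".m4a", ".mp3"}


def purge_old_recordings(folder: Path, max_age_days: float, now: Optional[float] = None) -> List[Path]:
    """Delete audio files in `folder` last modified more than `max_age_days` ago.

    Returns the paths that were deleted.
    """
    current = time.time() if now is None else now
    cutoff = current - max_age_days * 86_400  # seconds per day
    deleted = []
    for path in folder.iterdir():
        is_audio = path.is_file() and path.suffix.lower() in AUDIO_SUFFIXES
        if is_audio and path.stat().st_mtime < cutoff:
            path.unlink()  # ordinary delete, not a secure wipe
            deleted.append(path)
    return deleted
```

For example, `purge_old_recordings(Path("recordings"), max_age_days=7)` would remove audio older than a week from a hypothetical `recordings` folder. Keep in mind that an ordinary delete is not a secure wipe, and it does nothing about copies a cloud service may already hold.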

The Future of AI Transcription

As AI becomes more integrated into daily workflows, expectations around transcription privacy are rising.

We’re likely to see:

• More on-device AI processing

• Stricter data regulations

• Increased user demand for control

In this landscape, tools that prioritize privacy from the ground up will have a clear advantage.

Final Thoughts

So, is AI transcription safe?

It can be, but only if you choose the right kind of tool.

The biggest difference isn’t the interface or features. It’s where your data goes.

If privacy matters to you, moving toward local-first solutions is one of the smartest decisions you can make.